The robots.txt file lives in the root directory of a website and tells bots which pages or files they may and may not access. It operates as a set of guidelines that website owners use to control how search engines and other bots interact with their websites.

Why the robots.txt file is important

The robots.txt file affects an organization’s search engine optimization and how its pages appear in search results. Legitimate crawlers consult the robots.txt file when they crawl and index content. Additionally, popular search engine crawlers like Googlebot have what’s known as a crawl budget, and the robots.txt file directly impacts it. A crawl budget refers to the number of pages a crawler will crawl on a site in a given time period. Because a website may feature more pages than its crawl budget covers, robots.txt files enable organizations to prioritize crawling of their most relevant pages and exclude duplicate or non-public ones.

Malicious scraper bots do not adhere to robots.txt guidelines and often attempt to access the parts of a website that the file explicitly prohibits. Think of a robots.txt file as a line in the sand drawn to keep legitimate bots in check: honoring it is a voluntary, ethical choice, and nothing in the robots.txt rules themselves prevents a bot from crossing it. For that reason, applications typically run other security tools to protect their sensitive files and directories. Many of these tools pay close attention to the robots.txt file, because it can double as a honeypot: a bot that requests a path the file disallows quickly reveals itself as malicious.
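The honeypot idea can be sketched in a few lines of Python. This is a minimal illustration, not how any particular security tool works: the trap path `/bot-trap/`, the `(client_id, path)` log format, and the function name `flag_trap_visitors` are all hypothetical. The assumption is that the trap path is disallowed in robots.txt and linked from nowhere else, so a client that requests it has most likely ignored the file.

```python
# Hypothetical trap path: disallowed in robots.txt and linked nowhere on the site.
TRAP_PATH = "/bot-trap/"


def flag_trap_visitors(request_log, trap_path=TRAP_PATH):
    """Return the sorted client IDs that requested a path under trap_path.

    request_log is assumed to be an iterable of (client_id, path) pairs.
    """
    flagged = {client for client, path in request_log if path.startswith(trap_path)}
    return sorted(flagged)


# Made-up log entries for illustration only.
sample_log = [
    ("203.0.113.5", "/blog/post-1"),
    ("198.51.100.7", "/bot-trap/secret.html"),
    ("203.0.113.5", "/about"),
    ("198.51.100.7", "/bot-trap/"),
]

print(flag_trap_visitors(sample_log))  # ['198.51.100.7']
```

A real deployment would likely combine a signal like this with others before blocking a client, since a single request to a disallowed path is only a hint.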

How to create a robots.txt file

There are a few points to keep in mind when creating robots.txt files:

The file must be located at the domain's root, and each subdomain needs its own file.

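Paths in robots.txt rules are case-sensitive (/Private/ and /private/ are treated as different paths), and the file itself must be named robots.txt in lowercase.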

It’s easy to accidentally block crawling of everything, so be sure to understand a directive’s syntax before implementing it:

Disallow: / means disallow everything.

Disallow: means disallow nothing, which will allow everything.

Allow: / means allow everything.

Allow: means allow nothing explicitly. It does not disallow anything, because crawlers treat any path that no rule matches as allowed.
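The points above can be checked with Python's standard library. The sketch below uses `urllib.robotparser`, which is one parser among many and may differ from a given search engine's crawler in edge cases; the helper names `robots_url` and `can_crawl`, the `ExampleBot` user agent, and the example.com URLs are assumptions made for illustration.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url):
    """Build the robots.txt URL for the host that serves page_url."""
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any subdomain); replace the path.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


def can_crawl(rules, path, agent="ExampleBot"):
    """Parse robots.txt text and report whether agent may fetch path."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(agent, path)


# Each subdomain resolves to its own file at its own root.
print(robots_url("https://blog.example.com/posts/1?page=2"))
# https://blog.example.com/robots.txt

print(can_crawl("User-agent: *\nDisallow: /", "/any/page"))  # False
print(can_crawl("User-agent: *\nDisallow:", "/any/page"))    # True
print(can_crawl("User-agent: *\nAllow: /", "/any/page"))     # True
```

Running a check like this against a draft file before publishing it is a cheap way to catch a stray `Disallow: /`.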

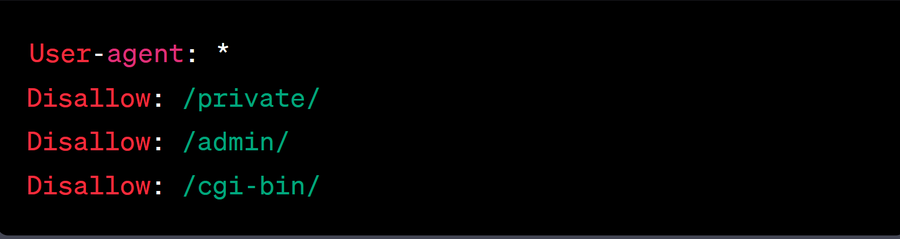

Here's an example of a basic robots.txt file:

In this example, the asterisk (*) in the "User-agent" field denotes that the rules apply to all web robots. Each "Disallow" directive specifies a directory or file that robots should not crawl; here, the disallowed directories are "/private/", "/admin/", and "/cgi-bin/". Using this logic, admins can outline exactly where bots shouldn’t crawl, and they can extend it to as many locations as desired.
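For readers who want to try these rules out, the sketch below rebuilds the file as text from the description above (a wildcard user agent and three disallowed directories) and checks a few URLs with Python's `urllib.robotparser`. The exact layout of the original file is an assumption, and the `ExampleBot` user agent and example.com URLs are made up.

```python
from urllib.robotparser import RobotFileParser

# Rules reconstructed from the description: all robots, three disallowed directories.
EXAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /admin/
Disallow: /cgi-bin/
"""


def load_rules(text):
    """Return a parser loaded with the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


rules = load_rules(EXAMPLE_ROBOTS_TXT)

# Hypothetical URLs; "ExampleBot" stands in for any crawler name.
for url in (
    "https://example.com/blog/welcome.html",
    "https://example.com/private/report.html",
    "https://example.com/admin/",
    "https://example.com/cgi-bin/search",
):
    verdict = "allowed" if rules.can_fetch("ExampleBot", url) else "blocked"
    print(f"{verdict}: {url}")
```

Only the first URL is reported as allowed; the other three fall under a disallowed directory. Extending the rules to more locations is a matter of adding further Disallow lines to the same group.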

The robots.txt file enables organizations to decide which pages a crawler can access, but it can also limit how fast a crawler operates. Crawl-delay is an unofficial directive organizations can use to limit how frequently a crawler makes requests, typically by specifying a number of seconds to wait between them. Doing so limits a crawler’s ability to overwhelm a server, and organizations can set a crawl delay for one particular crawler or for all crawlers that support the directive. It’s important to note that while search engines like Yahoo and Bing follow this unofficial directive, others (like Google’s Googlebot) ignore it and require adjustments through their own consoles to achieve the same result.
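As a rough illustration of a per-crawler crawl delay, the sketch below sets a delay for one named crawler and reads it back with `RobotFileParser.crawl_delay` from Python's standard library. The 10-second value, the `/private/` rule, the `OtherBot` name, and the helper `delay_for` are assumptions chosen for the example, not recommendations.

```python
from urllib.robotparser import RobotFileParser

# One group sets a delay for a single crawler; the wildcard group sets none.
DELAY_ROBOTS_TXT = """\
User-agent: bingbot
Crawl-delay: 10

User-agent: *
Disallow: /private/
"""

delay_rules = RobotFileParser()
delay_rules.parse(DELAY_ROBOTS_TXT.splitlines())


def delay_for(agent, default=0):
    """Return the crawl delay in seconds for agent, or default if none is set."""
    delay = delay_rules.crawl_delay(agent)
    return default if delay is None else delay


print(delay_for("bingbot"))   # 10
print(delay_for("OtherBot"))  # 0 (no Crawl-delay applies to this crawler)
```

A well-behaved crawler would sleep for the returned number of seconds between requests. Moving the Crawl-delay line into the `User-agent: *` group would apply it to every crawler that honors the directive.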

Summary

Website owners create the robots.txt file to guide how bots crawl their websites. While legitimate bots use this information to learn which pages to crawl, malicious scraper bots disregard it and crawl wherever they please.