With Fastly, you have the freedom and flexibility to completely customize your caching settings. From respecting Cache-Control headers sent from your origin servers to allowing you to granularly set rules for any object or set of objects that flow through the CDN, we give you full control.

We've worked hard to set sane default caching behaviors that improve performance right out of the box. These defaults ensure that private content doesn't get cached and apply a moderate time to live (TTL) when the origin sends no caching headers.

Some application servers send Set-Cookie or Cache-Control: private headers by default on all objects. This prevents those objects from being cached, which is why a few customers occasionally tell us that they don't see improvements after setting up their Fastly service.

The easiest way to fix this problem is by modifying the headers that the origin servers send on objects. But if that’s not possible, here are some tips that could help fix these issues.

Configuring Cache-Control At The Origin

By default, Fastly will pass (that is, not cache) responses that carry a Set-Cookie or Cache-Control: private header. These headers are often used for personalized data, such as shopping carts on ecommerce sites.
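As a rough illustration, the default behavior described above corresponds to checks like the following in vcl_fetch. This is a simplified sketch and not the complete VCL that Fastly generates for a service.

```vcl
sub vcl_fetch {
#FASTLY fetch

  # A response that sets a cookie is likely personalized: do not cache it.
  if (beresp.http.Set-Cookie) {
    return(pass);
  }

  # The origin explicitly marked the response as private: do not cache it.
  if (beresp.http.Cache-Control ~ "private") {
    return(pass);
  }

  # Anything else is eligible for caching.
  return(deliver);
}
```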

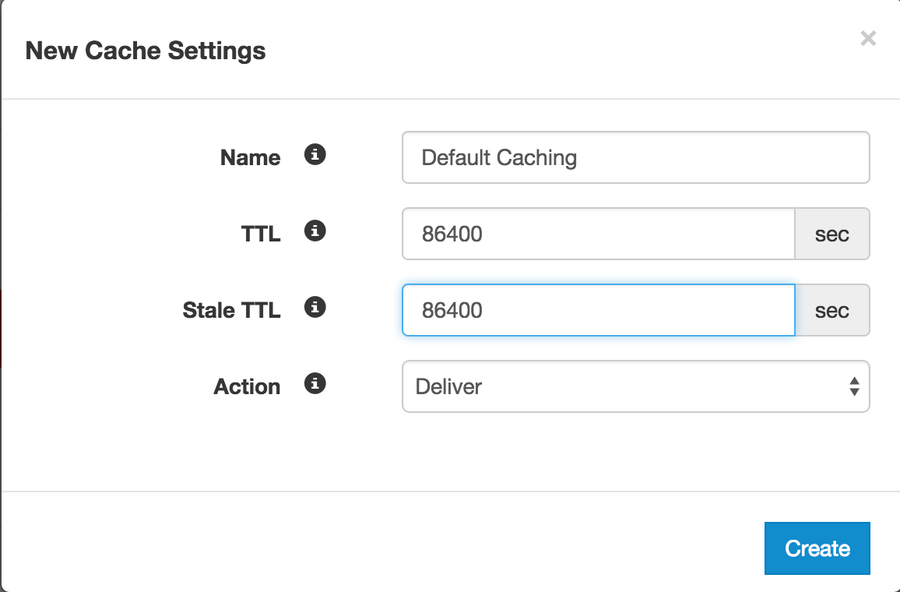

Many customers also want to pass responses that carry other Cache-Control directives, for example Cache-Control: no-cache, no-store. By default, Fastly treats these as directives that influence browser behavior, not the caching layer. That default is useful when you want to cache something for a long time on Fastly's edge servers but don't want browsers to cache it, because you expect the content to change but don't know when (e.g. news headlines). If you do want Fastly to pass these responses, our recommendation is to handle this by configuring Cache-Control at the origin. If you can't, you can add this rule to your Fastly configuration through a cache setting (under Settings → Cache Settings).

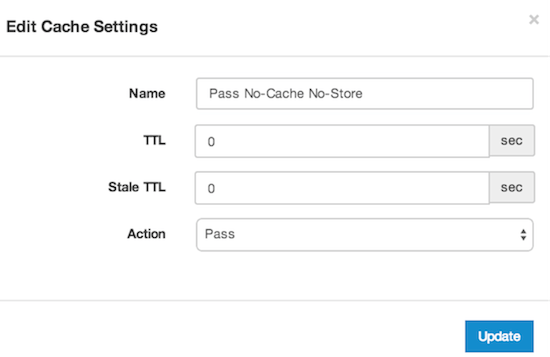

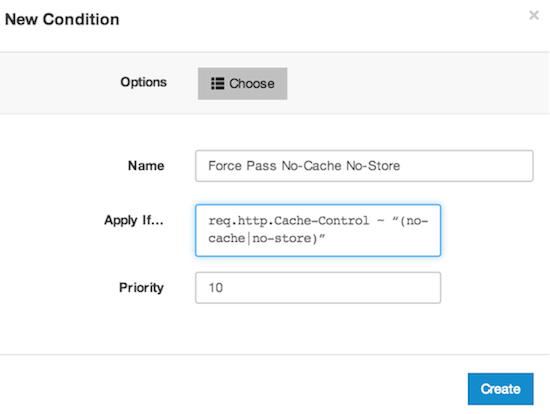

First, create a new cache setting with the action = PASS and TTLs of zero. Then, add a condition to the new rule to execute it: beresp.http.Cache-Control ~ "no-cache" || beresp.http.Cache-Control ~ "no-store".
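For readers who work in custom VCL, the cache setting and condition above amount to roughly the following. Treat it as a sketch of the equivalent logic, under the assumption that the "TTLs of zero" are the regular TTL and the stale (grace) TTL; the VCL Fastly actually generates may differ in detail.

```vcl
sub vcl_fetch {
#FASTLY fetch

  # Condition: the origin sent no-cache or no-store.
  if (beresp.http.Cache-Control ~ "no-cache" || beresp.http.Cache-Control ~ "no-store") {
    # TTLs of zero, as configured in the cache setting.
    set beresp.ttl = 0s;
    set beresp.grace = 0s;
    # Action = PASS: send the response to the client without caching it.
    return(pass);
  }

  return(deliver);
}
```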

Caching Other HTTP Responses

Fastly caches certain HTTP responses automatically: 200, 203, 300, 301, 302, 404, and 410. You may not want the same TTL for each of these responses, or you may want to cache other HTTP responses as well. Again, cache settings to the rescue. This time, your condition will be based on the status code.

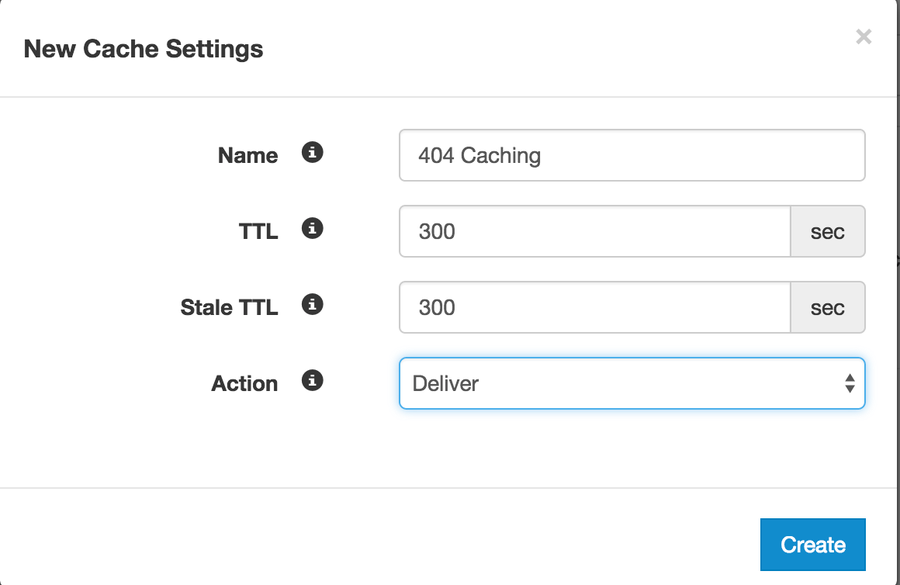

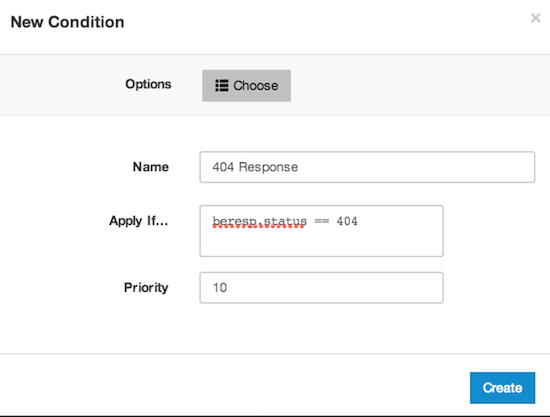

For instance, under Settings → Cache Settings, add a cache setting for 404 responses with a 5-minute (300s) TTL. To execute this rule, add the condition beresp.status == 404.
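In VCL terms, the 404 example could look something like the sketch below. It assumes that no later rule in vcl_fetch overrides the TTL once it has been set.

```vcl
sub vcl_fetch {
#FASTLY fetch

  # Condition from the cache setting: only "not found" responses.
  if (beresp.status == 404) {
    # Cache 404s for 5 minutes.
    set beresp.ttl = 300s;
  }

  return(deliver);
}
```

The same pattern, with a different status code and TTL in the condition, applies to the other responses Fastly caches automatically.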

Default Caching Rules

Fastly will honor the TTL settings sent from our customers' origin in a Surrogate-Control, Cache-Control, or Expires header. If the origin does not send any of these headers, Fastly applies a default TTL of 3600s (1 hour). Some customers might not realize this rule exists, and might have created their own default cache setting.
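The fallback described here can be sketched in VCL as follows. This is an approximation of the default logic for illustration; the exact checks in a given service's generated VCL may differ.

```vcl
sub vcl_fetch {
#FASTLY fetch

  if (beresp.http.Expires || beresp.http.Surrogate-Control ~ "max-age" || beresp.http.Cache-Control ~ "(s-maxage|max-age)") {
    # The origin supplied a TTL: keep it.
  } else {
    # No caching headers from the origin: apply the default TTL of 1 hour.
    set beresp.ttl = 3600s;
  }

  return(deliver);
}
```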

We wanted to give customers the flexibility to execute cache settings before running our default rules. If you have a cache setting with the action = DELIVER and without a condition, you will see this code block in your VCL near the top of vcl_fetch:

    # Default Caching
    set beresp.ttl = 86400s;
    set beresp.grace = 86400s;
    return(deliver);

The important line in this code block is return(deliver): it exits vcl_fetch immediately, so the object is cached and delivered before Fastly can run through the rest of the subroutine, including the default checks for private content. This matters because you may end up caching private content (sent with Cache-Control: private or Set-Cookie). On the other hand, this is how you would override application servers that add Set-Cookie headers to all objects, including public ones.
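One way to get the override without exposing private content is to attach a condition to the setting, so that only objects you know to be public exit early. The sketch below is an assumption for illustration, not a Fastly default: the list of file extensions is hypothetical, and removing Set-Cookie is only safe if those objects never depend on cookies.

```vcl
sub vcl_fetch {
#FASTLY fetch

  # Hypothetical condition: treat only static assets as public.
  if (req.url ~ "\.(css|js|png|jpg|gif)($|\?)") {
    # Drop the cookie the application server added to every object,
    # so it is not stored in cache and served to other users.
    unset beresp.http.Set-Cookie;
    set beresp.ttl = 86400s;
    set beresp.grace = 86400s;
    return(deliver);
  }

  # Everything else still goes through the checks for private content.
  if (beresp.http.Set-Cookie) {
    return(pass);
  }
  if (beresp.http.Cache-Control ~ "private") {
    return(pass);
  }

  return(deliver);
}
```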

Next Steps

At Fastly, we’ve worked to give our customers full control to achieve their ideal caching scenario. To learn more about ways to use Cache-Control headers, check out our series on API Caching. If you have any questions, please reach us by emailing support@fastly.com.