Websites still load too slowly. During the most critical time in the page load lifecycle, your connection is often almost totally idle. A new technology proposed by Fastly engineer Kazuho Oku hopes to make better use of that critical first couple of seconds.

Have you ever gone to a site on your phone only to end up staring at a page with no text for 10 seconds? No one likes to sit staring at a blank screen while a site’s custom font loads, so it makes sense for something as critical as that to be loaded as soon as possible. Link rel=preload was intended to be part of the solution to this problem. The browser parses a page’s HTTP headers before starting to read any of the content, so this is an ideal place to tell the browser to start downloading an asset that we know we’re going to need.

By default, the web is slow

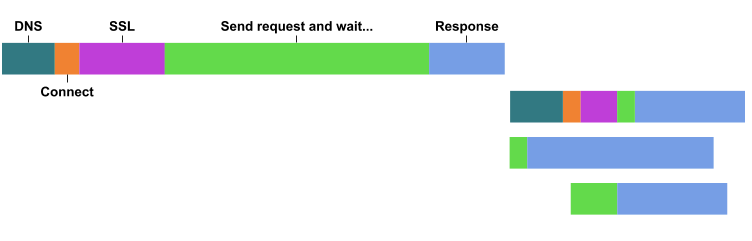

Let’s look at what happens if you don’t use preload. The browser can only start loading resources after it’s discovered that it needs them, which happens fastest for resources that are in the HTML during initial parsing of the document (such as <script>, <link rel=stylesheet> and <img>).

Resources that you only find after building the render tree are discovered later still. This is where you work out that you need a custom font, but to figure that out you first need to load the stylesheet, parse it, build the CSS object model and then build the render tree.

Even slower than that are resources that you add to the document via JavaScript loaders, which are triggered by an event like DOMContentLoaded. Putting all this together, you see a waterfall that is unoptimised and relatively nonsensical. There’s a ton of idle time on the network, and resources load either earlier than necessary or way too late:

Link rel=preload helps a lot

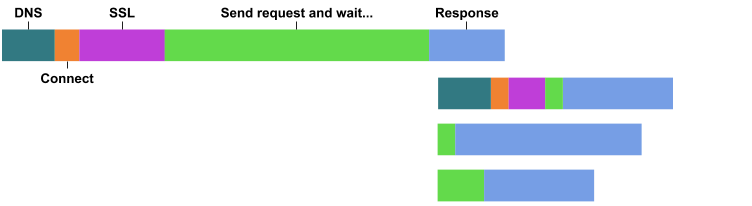

Now, in the last few years we’ve been able to do better by using Link rel=preload. For example:

Link: </resources/fonts/myfont.woff>; rel=preload; as=font; crossorigin

Link: </resources/css/something.css>; rel=preload; as=style

Link: </resources/js/main.js>; rel=preload; as=script

With these in place, the browser can start loading the resources as soon as the headers are received, and before starting to parse the HTML body:

This is a substantial improvement, especially for large pages and for critical resources that will otherwise be discovered late (notably fonts, but anything can be a critical resource, like a data file required to bootstrap a JavaScript application).
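As a minimal sketch of how a server-side application might assemble those headers, the helper below formats one Link value per resource. The function name `preload_link` and the `headers` list are illustrative assumptions, not part of any particular framework.

```python
def preload_link(url, as_type, crossorigin=False):
    """Format one Link header value that asks the browser to preload a resource."""
    parts = [f"<{url}>", "rel=preload", f"as={as_type}"]
    if crossorigin:
        # Fonts are fetched in anonymous CORS mode, so the preload has to say so too.
        parts.append("crossorigin")
    return "; ".join(parts)


# Hypothetical header list; a real application would hand these to its framework.
headers = [
    ("Link", preload_link("/resources/fonts/myfont.woff", "font", crossorigin=True)),
    ("Link", preload_link("/resources/css/something.css", "style")),
    ("Link", preload_link("/resources/js/main.js", "script")),
]

for name, value in headers:
    print(f"{name}: {value}")
```

Keeping the list of critical assets in one place like this also makes it easier to reuse the same values in more than one response.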

However, we can do better than this. Between the moment that the browser finishes sending the request, and when it receives the first bytes of the response, it’s not doing anything (the big green section on the initial request above).

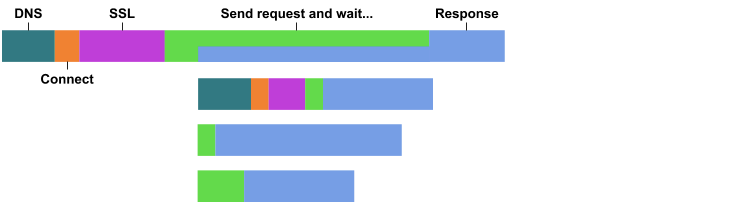

Making use of the ‘server think’ time with Early hints

The server, on the other hand, is really busy. It’s generating the response and deciding whether it’s successful or not. After some database access, API calls, authentication and whatever, you might decide the correct response is a 404 error.

Sometimes the server think time is less than the network latency. Sometimes it can be substantially more. But the important thing to realise is that they don’t overlap. While the server is thinking, we’re not sending any data to the client.

Interestingly though, even before you start thinking about generating a response, you probably already know some of the styles and fonts that need to be loaded to render the page. After all, your error pages generally use the same branding and design as your normal pages. It’d be great if you could send those Link rel=preload headers before the server does its thinking. This is what Early Hints is for, specified in RFC 8297, a product of the HTTP Working Group authored by my Fastly colleague Kazuho Oku. Witness the magic of multiple status lines in a single response:

HTTP/1.1 103 Early Hints

Link: <some-font-face.woff2>; rel="preload"; as="font"; crossorigin

Link: <main-styles.css>; rel="preload"; as="style"

HTTP/1.1 404 Not Found

Date: Fri, 26 May 2017 10:02:11 GMT

Content-Length: 1234

Content-Type: text/html; charset=utf-8

Link: <some-font-face.woff2>; rel="preload"; as="font"; crossorigin

Link: <main-styles.css>; rel="preload"; as="style"

The server can write the first, so-called “informational” response status as soon as it receives the request, and flush it to the network. Then, it can get to work on deciding what the real response will be, and generating it. Meanwhile, in the browser, you can start the preload much earlier.
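The server side of that exchange can be sketched roughly as follows. This is an illustration of the ordering only, not how any particular server implements it: `head`, `respond`, `write` and `build_final` are hypothetical names, and `write` stands in for whatever flushes bytes to the client connection.

```python
def head(status_line, headers):
    """Serialise one HTTP/1.1 response head: status line, headers, blank line."""
    lines = [status_line] + [f"{name}: {value}" for name, value in headers]
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")


# The assets we already know the page needs, whatever the final status is.
PRELOADS = [
    ("Link", '<some-font-face.woff2>; rel="preload"; as="font"; crossorigin'),
    ("Link", '<main-styles.css>; rel="preload"; as="style"'),
]


def respond(write, build_final):
    """Flush the hints first, then do the slow work and send the real response."""
    # 1. Informational response, written before any "thinking" happens.
    write(head("HTTP/1.1 103 Early Hints", PRELOADS))
    # 2. The slow part: database access, API calls, authentication and so on.
    status_line, body = build_final()
    # 3. Final response, repeating the Link headers as in the example above.
    final_headers = [
        ("Content-Length", str(len(body))),
        ("Content-Type", "text/html; charset=utf-8"),
    ] + PRELOADS
    write(head(status_line, final_headers) + body)


# Collect the writes in a list instead of a socket so the order is visible.
chunks = []
respond(chunks.append, lambda: ("HTTP/1.1 404 Not Found", b"<h1>Not found</h1>"))
print(chunks[0].decode("ascii"))
```

The point of the design is that the first `write` does not depend on `build_final` at all, so it can reach the network while the server is still working out what the real response is.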

This is going to require some changes to the way browsers, servers and CDNs work, and some browser vendors have expressed reservations about how difficult it will be to implement, so it's still unclear when this might ship. You can track its progress in the public trackers for Chrome and Firefox.

Eventually, we hope that you’ll be able to emit Early Hints directly from Fastly, whilst still sending the request to origin. We haven’t decided how to expose it in VCL yet, so let us know how you’d like to see it!

But what about HTTP/2 Server Push?

With HTTP/2, you get a new technology called Server Push that also seems to solve the same problem as sending Link rel=preload in an Early Hints response. While push does work (and you can even generate custom pushes from the edge with Fastly), there is substantial opposition to it on a few grounds:

The server doesn’t know whether the client already has the resource, so will often push when it doesn’t need to. Due to network buffering and latency, the client doesn’t usually have an opportunity to cancel the push before receiving the entire content. (Though there is a possible solution to this problem, in the form of the proposed Cache Digest header, which Kazuho is working on with Yoav Weiss from Akamai).

Pushed resources are connection-bound, so it’s easy to push a resource that a client ends up not using because it attempts to fetch it over a different connection. Clients may have to use another connection because the resource is on a different origin that doesn’t share the same TLS cert, or because it is being fetched in a different credentials mode.

H2 Push is not very consistently implemented, comparing the major browser vendors. That makes it hard to predict whether it will work or not in your particular use case.

Setting these aside, Early Hints and Server Push offer different trade-offs. Early Hints provides a more efficient use of the network in exchange for an additional round trip. If you anticipate low network latency and a long server think time, Early Hints is easily the right solution.

However, that’s not always the case. Let’s be optimistic and imagine humans will be on Mars sometime soon. They will be browsing the web with 20-45 minutes of latency per round trip, so the additional round trip is exceptionally painful, and the server think time is inconsequential in comparison. Here, Server Push easily wins. But if we do ever browse the web from Mars, it’s more likely that we’ll download some kind of packaged bundle, such as imagined by the current web packages and signed exchanges proposals.
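To put rough numbers on that trade-off, here is a deliberately simplified timing model. It assumes a single connection, ignores transfer time and connection setup, and assumes the server flushes hints or starts pushing the moment the request arrives. The function names and the scenario values are made up for illustration.

```python
def first_byte_of_asset(strategy, rtt, think):
    """Time from sending the page request until a critical asset starts arriving.

    rtt and think are in milliseconds. This is a toy model, not a measurement.
    """
    if strategy == "preload":
        # Link header only on the final response: wait for it, then fetch.
        return rtt + think + rtt
    if strategy == "early_hints":
        # The hint arrives after one round trip; fetching costs one more.
        return rtt + rtt
    if strategy == "server_push":
        # The server starts sending the asset as soon as the request arrives.
        return rtt
    raise ValueError(f"unknown strategy: {strategy}")


def first_byte_of_html(rtt, think):
    """The page itself cannot arrive before the server has finished thinking."""
    return rtt + think


# Hypothetical scenarios: (round trip, server think time), both in milliseconds.
SCENARIOS = {"nearby": (50, 500), "Mars": (40 * 60 * 1000, 500)}

for name, (rtt, think) in SCENARIOS.items():
    print(name, "html", first_byte_of_html(rtt, think))
    for strategy in ("preload", "early_hints", "server_push"):
        print(name, strategy, first_byte_of_asset(strategy, rtt, think))
```

In the nearby scenario, the extra round trip that Early Hints costs relative to push is hidden inside the think time, because the asset still starts arriving before the HTML does. At Mars distances, that extra round trip dominates everything else.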

Side benefit: Faster request collapsing

While the primary use case for Early Hints is in the browser, there’s an interesting potential benefit for CDNs as well. When Fastly receives lots of requests for the same resource at the same time, we normally send only one of them to origin, and put the others in a wait queue, a process known as request collapsing. If the response we eventually get back from the origin includes Cache-Control: private, we have to unqueue all the queued requests and send them all to origin individually, because we aren't allowed to use that one response to satisfy multiple requests.

We can’t make that decision until the response to the first request has been received. If we supported Early Hints, though, and the server issued an Early Hints response containing the Cache-Control header, we could know much earlier that the queue cannot be collapsed into a single request, and dispatch all the queued requests to origin immediately.
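The idea can be sketched with a toy wait queue. This is not Fastly's implementation: the class `CollapsedRequests`, its methods, and the plain-dict header representation are all assumptions made for the example.

```python
class CollapsedRequests:
    """Wait queue for concurrent requests to one resource (illustrative only)."""

    def __init__(self, send_to_origin):
        self.send_to_origin = send_to_origin  # callback that forwards one request
        self.waiting = []
        self.in_flight = False
        self.collapsing = True

    def add(self, request):
        if self.in_flight and self.collapsing:
            # Someone is already fetching this resource: wait for their response.
            self.waiting.append(request)
        else:
            self.in_flight = True
            self.send_to_origin(request)

    def on_response_headers(self, headers):
        """Call for any response head from origin, whether 103 or final."""
        cache_control = headers.get("Cache-Control", "")
        directives = [d.strip().lower() for d in cache_control.split(",")]
        if "private" in directives:
            # The response cannot be shared, so stop collapsing and release
            # every queued request to origin straight away.
            self.collapsing = False
            queued, self.waiting = self.waiting, []
            for request in queued:
                self.send_to_origin(request)


sent = []
queue = CollapsedRequests(sent.append)
for request_id in ("a", "b", "c"):
    queue.add(request_id)
print(sent)  # only the first request has gone to origin so far

queue.on_response_headers({"Cache-Control": "private, max-age=0"})
print(sent)  # the queued requests have now been released as well
```

Because `on_response_headers` does not care which response carried the header, an Early Hints response would trigger the release just as a final response does, only sooner.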

Ordering less critical content with priority hints

Early Hints is a great way to get access to some of the most valuable real estate on the waterfall: when the network is idle, the user is waiting, there’s only one request in flight, and there’s nothing on screen. But once the HTML is loaded, and the page is parsed, the number of resources that need fetching explodes. Now the important thing is not loading things as quickly as possible, but loading them in the right order. Browsers use a bewilderingly complex array of heuristics to determine loading priority by themselves, but if you want to override their verdict, you may in future be able to do that with Priority Hints:

<script src="main.js" async importance="high"></script>

<script src="non-critical-1.js" async importance="low"></script>

With this new importance attribute, developers can control the order in which resources will be downloaded if there is competition for the network. It’s also possible that low importance resources might be deferred by the browser until the CPU and network are idle or for other reasons of the device’s choosing.
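One way to picture the effect is as a reordering of a fetch queue. The sketch below is only a mental model, since real browsers weigh many more signals than this. The `RANK` table, the `fetch_order` function, the use of `auto` for resources without the attribute, and the `analytics.js` entry are all assumptions made for the example.

```python
# Lower rank is fetched first. "auto" stands in for "no importance attribute".
RANK = {"high": 0, "auto": 1, "low": 2}


def fetch_order(resources):
    """Order (url, importance) pairs: high first, then auto, then low.

    sorted() is stable, so document order is kept within each level.
    """
    ranked = sorted(resources, key=lambda resource: RANK[resource[1]])
    return [url for url, importance in ranked]


# Hypothetical resources in the order the parser discovered them.
discovered = [
    ("non-critical-1.js", "low"),
    ("analytics.js", "auto"),
    ("main.js", "high"),
]

order = fetch_order(discovered)
print(order)
```

The ordering only matters when resources actually compete for the network; with a single resource in the queue, the importance value changes nothing in this model.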

Can I use them?

Neither Priority Hints nor Early Hints is shipping today. H2O, the HTTP/2 server used and supported by Fastly, has started to land support for Early Hints (see PRs 1727 and 1767), and there is an intent to implement Priority Hints in Chrome, as well as active tracking of 1xx responses by the Chrome team to provide data on adoption. In the meantime, there’s no harm in starting to send Early Hints, so if you want to get ahead of the curve, go right ahead!