This is the first in a series on Lean Threat Intelligence. Check out Part 2 and Part 3.

My role at Fastly is to help architect our Threat Intelligence program. In this post, I’ll discuss how you can draw from open source resources to build a lean and powerful plan for your organization. This architecture needs to provide evidence-based knowledge* so that we can filter relevant security signals from the billions of connections we handle per day. Open source is a great resource: you can test stacks out quickly without committing to anything long term, you can participate in an active community of developers working on a shared problem, and it’s free! Afterwards, you can identify pain points where refinement might be necessary. At the same time, you stay lean in your planning phases and collect useful metrics along the way.

Standing on the shoulders of giants

The great thing about the open source and security communities is that there are a ton of resources available through blog posts, conference presentations, and papers. Rebekah Brown, a threat intelligence manager at Rapid7 who presented at The Cyber Threat Intelligence Summit @ SANS, shed light on how to do Threat Intelligence in a way that effectively supports deeper security goals.

When discussing threat intelligence, Brown highlighted the importance of actively using open source tools to satisfy the needs of security organizations. Particularly, Brown pokes fun at the ease-of-access to open source tools (and your friends who use them):

The main takeaway from this slide, and from her presentation as a whole, is that there is a myriad of open source tools, knowledge, and data available to help you get started in Threat Intelligence. But before you download even one tool, it’s important to understand your organization’s needs so you can develop a plan.

Consumers, AKA the target users

It’s important to know the day-to-day functions of your target users before you start trying to solve a problem for them. Work with them to list their responsibilities and needs, and use this list as a driver to make user stories,** which are a great way to establish a contract between you and the customers of your system. As long as your system upholds and completes the user story contracts, you’ve made something useful! Sounds easy, right? User stories are typically written like:

As a [role], I want [function], so I can [benefit].

This kind of story planning and business logic is used frequently in test-driven development, where you use programmatic language to define roles, functions, and benefits in a test. You write the tests, then they fail (because there is no code to make them pass), then you write the code to make them pass. Top-down approaches like this help you consider the input and output of the system rather than the intricate details, and they can help drive technology selection decisions quickly, before those decisions become a problem.
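To make the test-first idea concrete, here is a minimal sketch in Python of a user story turned into a test. Everything named here (`LogPlatform`, `ingest`, `query`, and the event fields) is hypothetical and invented for illustration; it is not a real product or the system described in this post. In practice you would write the test class first, watch it fail, and only then write something like `LogPlatform` to make it pass.

```python
import unittest


class LogPlatform:
    """Hypothetical in-memory stand-in for a log query platform."""

    def __init__(self):
        self._events = []

    def ingest(self, event):
        # Store one log event, represented as a plain dict.
        self._events.append(event)

    def query(self, **filters):
        # Return every stored event whose fields match all of the filters.
        return [
            event for event in self._events
            if all(event.get(key) == value for key, value in filters.items())
        ]


class SecurityOperationsStory(unittest.TestCase):
    """As a security operations member, I want to query logs,
    so I can perform triage in the case of a security event."""

    def test_query_returns_matching_telemetry(self):
        platform = LogPlatform()
        platform.ingest({"host": "web-1", "action": "login_failed"})
        platform.ingest({"host": "db-1", "action": "login_ok"})

        results = platform.query(action="login_failed")

        self.assertEqual(results, [{"host": "web-1", "action": "login_failed"}])
```

Saved to a file, this can be run with `python -m unittest <file>`. The test only talks about inputs and outputs, which is the point: the story stays valid even if the storage behind `query` is swapped out later.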

Now imagine a situation where a security organization in a company needs Threat Intelligence to augment research, incident response, and application security. The following user stories could help drive this project:

As a security operations member, I want a platform where I can issue queries for logs and receive information on internal telemetry, so I can perform triage and remediation in the case of a security event.

As a security operations member, I want automated alerting on log events, so that I can identify threats as quickly as possible and create a security event.

As a security services company, I want to ship net-new security data into my edge technologies, so I can secure my customers’ data and infrastructure.

As a security researcher, I want to use threat intelligence feeds against my log management system, so I can correlate the incoming indicators to see if a security event has happened.

These stories include benchmarks that can drive conversations across multiple teams, helping them to frame their issues and what they need to fix them. Most of the time, customers want the problem solved without too much emphasis on how you solve it. Understanding the high-level problems in user stories helps you focus on prioritized issues and not let the intricacies of system design cloud the overall goals of the project.
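The security researcher story is a good example of a story that can be pinned down in a few lines before any technology is chosen. The sketch below is one possible reading of "correlate the incoming indicators" as a plain lookup of log fields against a set of known-bad values. The function name `correlate`, the field names, and the sample values are assumptions made for illustration, and the addresses come from ranges reserved for documentation.

```python
def correlate(indicators, events, fields=("src_ip", "dst_ip", "domain")):
    """Return (event, field, value) for each event field that matches an indicator."""
    known_bad = set(indicators)  # a set keeps each membership check cheap
    hits = []
    for event in events:
        for field in fields:
            value = event.get(field)
            if value in known_bad:
                hits.append((event, field, value))
    return hits


# Hypothetical feed and log events, for illustration only.
feed = ["203.0.113.7", "bad.example.com"]
logs = [
    {"src_ip": "203.0.113.7", "dst_ip": "198.51.100.10"},
    {"src_ip": "198.51.100.22", "domain": "www.example.org"},
]
matches = correlate(feed, logs)  # only the first event contains an indicator
```

A real feed carries more than bare values (confidence, age, source), so exact matching like this is only a starting point for the conversation with the research team.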

The systems diagram

Given the stories listed above and (hopefully) buy-in from interested parties, you can now start sketching out how this system would work. Security operations members want automated alerting on internal telemetry, meaning the system requires a combination of stream and batch processing so that the team can alert on one atomic event or on a collection of events. The security research team can use the same types of processing to augment searching for the operations team. Lastly, the company as a whole wants this same system to interface with edge technologies to help protect its customers.
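The difference between alerting on one atomic event and alerting on a collection of events can be sketched in a few lines of Python. The rules, field names (`action`, `src`, `ts`), and thresholds below are assumptions chosen for illustration, not rules from any real deployment.

```python
from collections import defaultdict, deque


def atomic_alert(event):
    """Alert on a single event: here, any event recording a root login."""
    return event.get("action") == "root_login"


def windowed_alerts(events, threshold=3, window_seconds=60):
    """Alert on a collection of events: at least `threshold` failed logins
    from one source within `window_seconds`.

    Events must be sorted by their `ts` timestamp (in seconds).
    """
    recent = defaultdict(deque)  # source -> timestamps of recent failures
    alerts = []
    for event in events:
        if event.get("action") != "login_failed":
            continue
        times = recent[event["src"]]
        times.append(event["ts"])
        # Drop failures that have fallen out of the time window.
        while times and event["ts"] - times[0] > window_seconds:
            times.popleft()
        if len(times) >= threshold:
            alerts.append((event["src"], event["ts"]))
    return alerts
```

The atomic rule needs no memory, so it fits naturally into stream processing. The windowed rule has to keep state per source, which is the kind of requirement that later drives the choice between stream and batch tooling.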

It’s important to notice that there is still no design in place, and the technologies should not matter at this point. We just know that we need log aggregation, correlation, alerting, and the ability to apply heuristics to data.

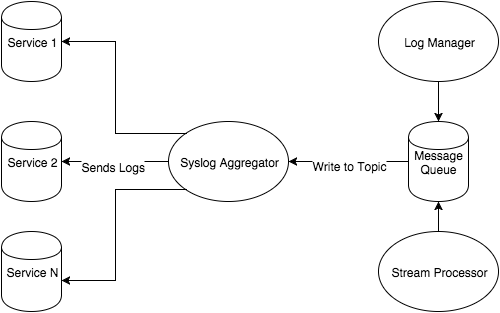

Whenever I architect a system, I like to create a systems diagram that shows information flow and dependencies. Information flow can be left to right or top to bottom, as long as it makes sense to whomever you’re explaining it to. Arrows show dependencies between isolated systems, so if an arrow goes from B -> A it means B depends on A to perform its function. Below is a possible systems diagram for our log management system:

There are a number of services that produce logs, and there’s already a syslog aggregator. This aggregator depends on these services to forward logs to the server so it can retain them for later use. This system was already in place and working when I arrived at Fastly, and it’s much easier to build a system around currently implemented technologies than to strip them out and create new ones.
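The arrow convention translates directly into a small data structure, which can be handy for keeping a diagram honest as it grows. The sketch below uses Python's standard `graphlib` module (Python 3.9 or later). The only edge taken from the text is that the syslog aggregator depends on the services that forward logs to it; the service names are placeholders.

```python
from graphlib import CycleError, TopologicalSorter

# Map each system to the systems it depends on: the arrow "B -> A" becomes B: [A].
depends_on = {
    "syslog_aggregator": ["service_a", "service_b"],  # placeholder service names
}


def dependency_order(graph):
    """List systems so that each one appears after everything it depends on.

    Raises graphlib.CycleError if the diagram contains a circular dependency.
    """
    return list(TopologicalSorter(graph).static_order())


order = dependency_order(depends_on)  # the aggregator comes after both services
```

Catching `CycleError` is a cheap way to notice when two systems in the diagram have ended up depending on each other.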

The new portion of this system includes a log manager and a stream processor. The log manager will help solve stories 1 & 2, and both the log manager and the stream processor will help solve stories 3 & 4. I included a message queue in the pipeline because stream processing and log management both need something that can handle a high volume of logs while also supporting multiple consumers of those logs. Together, these three components allow for the different processing, retention, and alerting requirements. Also, there is open source software for virtually everything in this diagram, so we’re off to a great start.
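The property that matters most in the message queue is that several consumers can each read the full stream of logs. Here is a minimal in-memory sketch of that fan-out behavior. `FanOutQueue` and its methods are hypothetical names invented for this example, and a real deployment would use an existing open source queue rather than anything like this.

```python
from collections import deque


class FanOutQueue:
    """Hypothetical in-memory queue in which every consumer sees every message."""

    def __init__(self):
        self._subscribers = {}

    def subscribe(self, name):
        # Each consumer gets its own buffer so it can read at its own pace.
        self._subscribers[name] = deque()

    def publish(self, message):
        # Deliver a copy of the message reference to every subscriber's buffer.
        for buffer in self._subscribers.values():
            buffer.append(message)

    def poll(self, name):
        # Return the next unread message for this consumer, or None if caught up.
        buffer = self._subscribers[name]
        return buffer.popleft() if buffer else None


queue = FanOutQueue()
queue.subscribe("log_manager")
queue.subscribe("stream_processor")
queue.publish({"host": "web-1", "action": "login_failed"})

# Both consumers receive the same log event independently.
for_log_manager = queue.poll("log_manager")
for_stream_processor = queue.poll("stream_processor")
```

Because each consumer has its own read position, a slow log manager does not hold up the stream processor, which is the behavior the diagram relies on.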

Moving forward: the plan + budget

When designing any system, it’s important to understand the issues at hand and plan accordingly before you get started. Both the technology and security industries are notorious for obsessing over a specific problem, and startups and well-established companies alike can fall victim to throwing money at these problems without considering customer needs or being lean with their time. This creates technical debt in the form of entrenchment in a certain technology, contract terms, or sunk costs. It can also make redesign nearly impossible, and it makes your engineers unhappy. (Nobody likes an unhappy engineer!)

Read Part 2 to learn how we select technology for Lean Threat Intelligence, including tools and data science techniques from Niddel Chief Data Scientist Alex Pinto for evaluating intelligence feeds. (Alex, along with Rolf Rolles, will be giving talks at our first Security Speaker Series on February 25.) Stay tuned.

Footnotes:

*Since I graduated from college I’ve seen a lot of job and product listings for “threat intelligence.” Security, as an industry, has a hard time defining it, and companies love spending money on it. Gartner defines threat intelligence as “evidence-based knowledge, including context, mechanisms, indicators, implications and actionable advice, about an existing or emerging menace or hazard to assets that can be used to inform decisions regarding the subject's response to that menace or hazard.” I have never heard of a security threat called a hazard or menace before, but I do agree with Gartner on their description of evidence-based knowledge. The hard problem here is defining what it means to have things like context, mechanisms, indicators, and advice in threat intelligence.

**Read more on user stories and agile development here.