Thanks to its approachable codebase and clean abstractions, id Software’s DOOM has become one of the most ported games in history. That made it feel like a perfect project to port to Compute, which is built on our serverless compute environment, as a way to experiment with different applications of the product.

Showing that DOOM could run interactively on Compute would push the boundaries of performance on the product and give us a tangible demo to point to as a stake in the ground, one that showcases the exciting possibilities Compute presents. Let’s explore how we did it.

A brief history of DOOM

DOOM was developed by id Software and released in December 1993. id Software had made a living developing high-quality 2D games, but with Wolfenstein 3D in 1992 and then DOOM the following year, they made a historic leap into 3D, taking advantage of the quickly evolving PC hardware landscape to push the boundaries of the industry.

DOOM was open sourced in 1997 with the README carrying the words, “Port it to your favorite operating system”. Many fans did just that, with DOOM being ported to hundreds of platforms, from the obvious to the obscure. As both a fan of DOOM and an employee of Fastly, I wanted to test the potential of Compute. Here’s how I brought this iconic video game to Compute.

Worth noting: the “Game Engine Black Book” by Fabien Sanglard is a fantastic resource that I referred to frequently during this project. This book and his other one about Wolfenstein are well-researched deep dives into key moments of game development history, and both are entertaining and educational.

Porting

The strategy I took for porting DOOM was a two-step process:

1. Get the platform-independent code (i.e., code not relying on any specific architecture/platform syscalls or SDKs) compiling and running. This is the bulk of what most would consider the “gameplay.”
2. Replace platform-specific API calls as necessary for the target platform. This is code that mainly deals with input and output, including rendering and audio.

There is no official public interface for C bindings, so to try this at home, you’ll have to deduce the C APIs from the fastly-sys crate.

Common code

Getting DOOM running without rendering or audio on Compute was fairly straightforward. The codebase has prefixes on every function name, and it conveniently uses “I_” for all the implementation-specific functions, so it was easy enough to go through the codebase and remove these from compilation. Once that was done, I used wasi-sdk to target a Wasm binary. WebAssembly is designed to compile native code without much fuss, so this change was very straightforward.

The fixes I needed to make to get the game running as a WebAssembly binary were related to DOOM being developed in a time of 32-bit computing. There are a number of places where the code assumes pointers are 4 bytes, which, at the time, was a perfectly reasonable choice to make. Data in DOOM is loaded from a file that contains all the assets created by the development team and bundled together at release time. This data is loaded directly into memory and cast to the in-game C structure that it represents. If there are any pointers involved in these structures, loading the data in a 64-bit environment results in the data not overlaying properly on the structure, and thus in unexpected behaviour. These cases were fairly straightforward to track down, since they initially showed up as obvious crashes.
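To make the pointer-size problem concrete, here is a minimal sketch in C of one common way to avoid it. The record layout and every name in it (`mem_record_t`, `read_le32`, `decode_record`) are hypothetical and not taken from the DOOM source. The idea is only that the on-disk layout is described with fixed-width fields and decoded one field at a time, so the in-memory structure can hold a native pointer of any size.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical on-disk record, 16 bytes on every target:
   bytes 0-3 width, 4-7 height, 8-11 pixel offset, 12-15 name.
   A struct cast would break if the third slot were declared as a pointer. */
enum { DISK_RECORD_SIZE = 16 };

/* In-memory form: free to hold a native pointer (4 or 8 bytes). */
typedef struct {
    int32_t width;
    int32_t height;
    const uint8_t *pixels;  /* resolved at load time, never read from disk */
    char name[5];           /* 4 name bytes plus a terminating NUL */
} mem_record_t;

/* Read a little-endian 32-bit value byte by byte, independent of the
   host's endianness and alignment rules. */
static uint32_t read_le32(const uint8_t *p)
{
    return (uint32_t)p[0] | ((uint32_t)p[1] << 8) |
           ((uint32_t)p[2] << 16) | ((uint32_t)p[3] << 24);
}

/* Decode the record at `record_offset` inside the loaded asset file.
   Returns 0 on success, -1 if the record or its offset is out of bounds. */
static int decode_record(const uint8_t *file, size_t file_len,
                         size_t record_offset, mem_record_t *out)
{
    assert(file != NULL && out != NULL);
    if (record_offset > file_len ||
        file_len - record_offset < DISK_RECORD_SIZE)
        return -1;                      /* record would run past the file */

    const uint8_t *raw = file + record_offset;
    uint32_t pixel_offset = read_le32(raw + 8);
    if (pixel_offset > file_len)
        return -1;                      /* offset points outside the file */

    out->width  = (int32_t)read_le32(raw + 0);
    out->height = (int32_t)read_le32(raw + 4);
    out->pixels = file + pixel_offset;  /* offset becomes a native pointer */
    memcpy(out->name, raw + 12, 4);
    out->name[4] = '\0';
    return 0;
}
```

Decoding field by field costs a few extra lines per structure, but the file format no longer depends on `sizeof(void *)` on the machine that loads it.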

Game loop changes

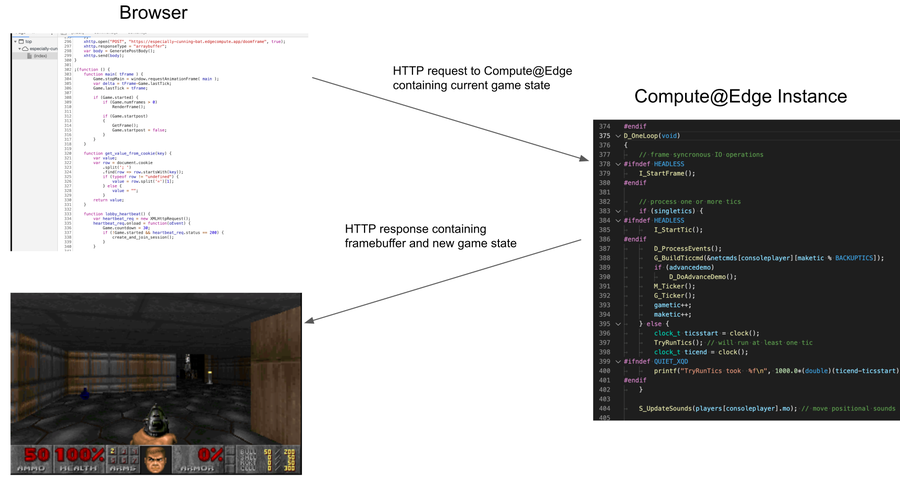

In order to get the common code running on Compute, I had to refactor the traditional game loop that DOOM employed. A typical game will initialize and then run in an endless loop, doing an input->simulation->output tick over and over at the desired frequency, taking inputs from the local input devices such as a keyboard, mouse, or controller, and outputting video and audio. On Compute, however, a process like this will eventually be evicted by the platform, since the intent is for the instance to start up, do some work, and then return to the caller. I thus removed the loop entirely and changed the instance to only run a single frame of the game.

The overall result looked something like this, run in a loop:

In the following sections, I will go into more detail about each of these steps.
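As a rough illustration of the "one request, one frame" model described above, here is a minimal sketch in C. Everything in it is a toy stand-in: `game_state_t`, `run_single_frame`, and the single-pixel "renderer" are hypothetical, and the 320x200 framebuffer is DOOM's original resolution, used here as an assumption because the demo's actual resolution is not stated.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical stand-in for the engine's saved state. */
typedef struct {
    uint32_t tick;      /* number of frames simulated so far */
    int32_t  player_x;  /* toy "world" state: a horizontal position */
} game_state_t;

enum { FB_WIDTH = 320, FB_HEIGHT = 200, FB_SIZE = FB_WIDTH * FB_HEIGHT };

/* One request runs exactly one frame: restore the state the client sent,
   apply this frame's input, advance one tick, and render. There is no
   loop here; the "loop" is the client issuing the next request. */
static void run_single_frame(const game_state_t *saved, int32_t move_x,
                             game_state_t *next, uint8_t *framebuffer)
{
    assert(saved != NULL && next != NULL && framebuffer != NULL);

    *next = *saved;                   /* 1. load state from the request  */
    next->player_x += move_x;         /* 2. apply input                  */
    if (next->player_x < 0)
        next->player_x = 0;           /*    keep the player on screen    */
    if (next->player_x > FB_WIDTH - 1)
        next->player_x = FB_WIDTH - 1;
    next->tick += 1;                  /* 3. simulate one tick            */

    memset(framebuffer, 0, FB_SIZE);  /* 4. render: clear, draw 1 pixel  */
    framebuffer[(FB_HEIGHT / 2) * FB_WIDTH + next->player_x] = 255;
}
```

The caller would then send `framebuffer` and `*next` back in the response, and the client would return `*next` as the saved state of its following request.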

Output

In video games, the memory that holds the final image that is displayed to the player is called the framebuffer. In modern games, the framebuffer is often constructed in specialized GPU hardware, as the final image is often the result of running a number of pipeline steps on the GPU. In 1993, however, rendering was done in software, and in DOOM, the final buffer was available to the programmer in a basic C array. This design made porting DOOM to new platforms relatively painless, as it gave porting developers a simple, understandable starting point to work from.

In the case of Compute, I wanted to return the framebuffer to the player’s browser where it could be displayed. This was as simple as using the C API to write the framebuffer to a response body and then send that body downstream:

    // gets a pointer to the framebuffer
    byte* framebuffer = GetFramebuffer(&framebuffer_size);
    BodyWrite(bodyhandle, framebuffer, framebuffer_size,...);
    SendDownStream(handle, bodyhandle, 0);

When the client running in the browser receives the HTTP response from Compute, it parses out the framebuffer and renders it in the browser.

State

Replicating the game loop in this new model requires us to save state somewhere, so that when we call Compute for subsequent frames, we can tell the new instance where we were in the game. I was able to take advantage of the save-load functionality present in the game, which originally provided the player the ability to save the state of the game to disk, and then later reload the game and continue playing where they left off.

I used the same mechanism for state as for the framebuffer: at the end of the game frame, I called into the save system to get a buffer representing the game state, and then I piggybacked that onto the framebuffer when returning the HTTP response to the caller.

    // gets a pointer to the framebuffer
    byte* resp = GetFramebuffer(&framebuffer_size);
    // gets the game state and appends it directly after the framebuffer
    // (assumes the buffer has room for state_size more bytes)
    byte* state = GetGameState(&state_size);
    memcpy(resp + framebuffer_size, state, state_size);
    BodyWrite(bodyhandle, resp, framebuffer_size + state_size,...);
    SendDownStream(handle, bodyhandle, 0);

Accompanying this change, the client was modified to separate the framebuffer from the state and keep the state stored locally, while displaying the framebuffer in the browser. The next time it issues a request to Compute, it passes the state in the request body, which the Compute instance can then read and pass into the game like so:

    BodyRead(bodyhandle, buffer,...);
    LoadGameFromBuffer(buffer);

At this point, if we run our game frame, it runs as if it were the tick immediately after the game state was saved.
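The piggybacking of state onto the framebuffer can be sketched in C as a pair of helpers. This is a minimal sketch under stated assumptions: `pack_response` and `split_response` are hypothetical names, the framebuffer size is assumed to be fixed and known to both sides, and the real client does the splitting in the browser rather than in C.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Server side: copy the framebuffer, then the state, into one buffer.
   Returns the number of bytes written, or 0 if `out` is too small. */
static size_t pack_response(const uint8_t *fb, size_t fb_size,
                            const uint8_t *state, size_t state_size,
                            uint8_t *out, size_t out_cap)
{
    assert(fb != NULL && state != NULL && out != NULL);
    if (fb_size > out_cap || state_size > out_cap - fb_size)
        return 0;
    memcpy(out, fb, fb_size);
    memcpy(out + fb_size, state, state_size);
    return fb_size + state_size;
}

/* Receiving side: because the framebuffer size is fixed, everything
   after the first `fb_size` bytes is the saved game state.
   Returns 0 on success, -1 if the response is shorter than a frame. */
static int split_response(const uint8_t *resp, size_t resp_size,
                          size_t fb_size,
                          const uint8_t **state, size_t *state_size)
{
    assert(resp != NULL && state != NULL && state_size != NULL);
    if (resp_size < fb_size)
        return -1;
    *state = resp + fb_size;
    *state_size = resp_size - fb_size;
    return 0;
}
```

A fixed-size prefix keeps the format trivial. If the framebuffer size could vary, a small length header in front of each part would be the usual alternative.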

Input

The next thing we need is user input so the player can actually play the game! DOOM’s input system is abstracted over the concept of input events, for example, “the player pressed key ‘W’” or “the player moved the mouse X distance.” We can generate input events in the browser that map to what DOOM expects fairly easily using standard JavaScript event listeners:

    document.addEventListener('keydown', (event) => {
      // save event.keyCode in a form we can send later
    });

I send these input events along with the state when making the HTTP request to Compute. The instance then parses them into a form we can pass into the game engine before running the frame.
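On the instance side, the parsing step could look something like the following C sketch. The wire format is an assumption made for illustration (two bytes per event: a type byte followed by a key code), and `input_event_t` and `decode_input_events` are hypothetical names rather than DOOM's or Fastly's.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical event types, matching the type byte on the wire. */
typedef enum { EV_KEY_DOWN = 0, EV_KEY_UP = 1 } input_type_t;

typedef struct {
    input_type_t type;
    int key;            /* browser key code, e.g. 87 for 'W' */
} input_event_t;

enum { WIRE_EVENT_SIZE = 2 };  /* assumed: 1 type byte + 1 key byte */

/* Decode the input portion of the request body into engine-style events.
   Returns the number of events decoded, or -1 on malformed input. */
static int decode_input_events(const uint8_t *wire, size_t wire_len,
                               input_event_t *events, size_t max_events)
{
    assert(events != NULL);
    assert(wire != NULL || wire_len == 0);

    if (wire_len % WIRE_EVENT_SIZE != 0)
        return -1;                       /* truncated event */
    size_t count = wire_len / WIRE_EVENT_SIZE;
    if (count > max_events)
        return -1;                       /* more events than we can hold */

    for (size_t i = 0; i < count; i++) {
        uint8_t type = wire[i * WIRE_EVENT_SIZE];
        if (type != EV_KEY_DOWN && type != EV_KEY_UP)
            return -1;                   /* unknown event type */
        events[i].type = (input_type_t)type;
        events[i].key = wire[i * WIRE_EVENT_SIZE + 1];
    }
    return (int)count;
}
```

Each decoded event would then be handed to the engine's event queue before the frame runs. Mouse events would need a wider record than this two-byte format allows.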

Optimizations

The first working version of this demo ran at ~200ms per round trip. This is not acceptable for an interactive game. Typical games run at roughly 33ms per frame, which translates to 30 FPS, or roughly 16ms per frame, which translates to 60 FPS. Given that latency would be a non-trivial part of our update frequency, I decided that 50ms was a good target to aim for, which is a 4x improvement over the base version.

A number of the optimizations I implemented centered on the change from running a continuous game loop to running a single frame. A lot of game systems are designed around the notion that each tick is a delta of the previous frame. The game keeps state that is not captured in the save game but that is used each frame to make decisions. A number of these systems needed tweaking, both to ensure functionality worked properly and for performance reasons. Many of them worked best when they behaved as if the current frame were not the first one, since the first frame is when a lot of variables and state get initialized.

The game did a number of precomputations on startup, mostly involving trigonometry for doing view space to world space calculations. These precomputed tables also required knowing the screen resolution of the game, which is why they were built at runtime. For my purposes, I was keeping the rendering resolution fixed, so I could just embed the tables into the compiled binary and avoid doing this startup computation every frame.
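The table-embedding idea can be sketched in C as follows. This is a simplified, integer-only stand-in, not DOOM's actual tables, which involve trigonometry: `build_column_slopes` fills a per-column table that depends on the screen width, and `dump_table_as_c` prints it as a C initializer that could be generated once offline and compiled in. Both function names and the 320-pixel width are assumptions.

```c
#include <assert.h>
#include <inttypes.h>
#include <stddef.h>
#include <stdint.h>
#include <stdio.h>

enum { SCREEN_WIDTH = 320, FRAC_UNIT = 1 << 16 };  /* 16.16 fixed point */

/* Runtime version: slopes[x] is the horizontal slope of the view ray
   through screen column x for a 90-degree field of view, so it runs
   from -1.0 at the left edge through 0 at the centre column. */
static void build_column_slopes(int32_t *slopes, int width)
{
    assert(slopes != NULL && width > 0 && width % 2 == 0);
    int half = width / 2;
    for (int x = 0; x < width; x++)
        slopes[x] = (int32_t)(((int64_t)(x - half) * FRAC_UNIT) / half);
}

/* Offline version: emit the same table as C source. With a fixed
   resolution the output can be pasted into the build as a
   `static const` array, so no work is repeated on every request. */
static void dump_table_as_c(FILE *out, const char *name,
                            const int32_t *table, int count)
{
    assert(out != NULL && name != NULL && table != NULL && count > 0);
    fprintf(out, "static const int32_t %s[%d] = {\n", name, count);
    for (int i = 0; i < count; i++)
        fprintf(out, "    %" PRId32 ",\n", table[i]);
    fprintf(out, "};\n");
}
```

The trade-off is a slightly larger binary in exchange for skipping the computation, which matters more than usual when every frame starts a fresh instance.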

I managed to get the game running at 50-75ms per tick. There is still work that could be done to bring this closer to what DOOM shipped with, but this proved that we could iterate on a project like this on Compute.

Takeaways

This was my first foray into Compute, and I wasn’t sure what to expect in terms of debugging and iterating. The platform is being actively and continuously improved, and throughout the three weeks I worked on this, I saw changes land that improved deployment reliability and debuggability. Specifically, I want to call out Log Tailing, which allowed me to see prints coming out of DOOM in near real time. When iterating on a fairly opaque C program, especially before I had rendering working, seeing these prints was essential for debugging problems. Overall, deploying to Compute felt similar to working on a traditional video game console.

To be clear, this would not be an ideal solution for running a real-time game requiring game updates at a timely frequency. There are no real advantages to be gained by running a game of this sort in this manner. The purpose of this experiment was to push the boundaries of the platform, to create a compelling demo to discover and showcase possibilities, and to provide inspiration and excitement for the platform. There are surely use cases for video games utilizing this platform, and we are excited to continue investigating ways to make Compute a compelling product for more industries going forward.

If you want to try the demo yourself, we have it running on our Developer Hub. Check it out.